Can an AI Learn the Art of Valet Parking?

Valet parking is a classic test of human multitasking. It’s not just about driving; it’s a high-pressure logistical puzzle that combines spatial reasoning, memory, and strategic decision-making against a ticking clock. This complexity makes it a fascinating and challenging problem to solve with Artificial Intelligence.

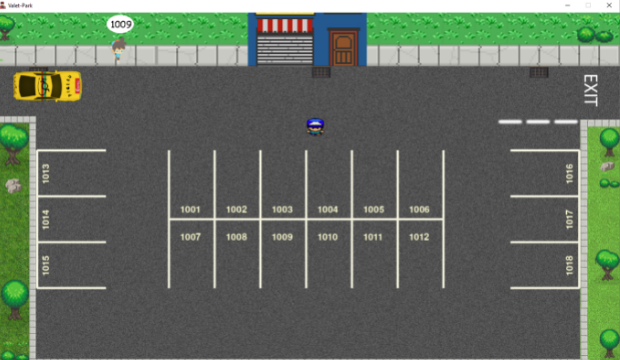

This project explores that challenge by teaching a Reinforcement Learning (RL) agent to work as a valet in a custom-built 2D environment called “Valet-Park.”

The Environment: Welcome to Valet-Park

The environment, inspired by classic top-down arcade games, is designed to test the core skills of a valet:

- The Task: Customers arrive at the entrance, drop off their car, and declare their desired parking spot number. The agent must take the car, park it in the correct spot, and later retrieve it when the customer returns to the exit.

- The Challenge: Success isn’t just about parking cars. The agent is penalized for real-world mistakes:

  - Collisions: Hitting other cars or obstacles.

  - Blocking Traffic: Leaving cars at the entrance for too long.

  - Poor Service: Making customers wait at the exit.

- The Memory Component: To succeed, the agent must remember which car belongs to which customer. Every customer is unique, but several customers may drive identical car models.
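To make the penalties above concrete, here is a minimal sketch of how a per-step reward could be assembled from them. It is an illustration under stated assumptions, not the reward used in the prototype: the names `StepEvents`, `RewardWeights`, and `step_reward`, the bonus terms for correct parking and retrieval, and every numeric weight are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class StepEvents:
    """What happened during one simulation step (hypothetical fields)."""
    collisions: int = 0                 # cars or obstacles hit this step
    cars_waiting_at_entrance: int = 0   # dropped-off cars still blocking the entrance
    customers_waiting_at_exit: int = 0  # customers still waiting for their car
    correct_parks: int = 0              # cars parked in the requested spot
    correct_returns: int = 0            # cars handed back to the right customer


@dataclass(frozen=True)
class RewardWeights:
    """Illustrative weights only; real values would need tuning."""
    collision: float = -5.0
    entrance_wait: float = -0.1
    exit_wait: float = -0.2
    correct_park: float = 1.0
    correct_return: float = 2.0


def step_reward(events: StepEvents, w: RewardWeights = RewardWeights()) -> float:
    """Sum the weighted penalties and bonuses for a single step."""
    return (
        w.collision * events.collisions
        + w.entrance_wait * events.cars_waiting_at_entrance
        + w.exit_wait * events.customers_waiting_at_exit
        + w.correct_park * events.correct_parks
        + w.correct_return * events.correct_returns
    )


if __name__ == "__main__":
    # One collision while completing one correct park.
    print(step_reward(StepEvents(collisions=1, correct_parks=1)))
```

Because the waiting penalties are charged on every step, they grow with how long a car or customer is left waiting, which is one simple way to express "too long" without a hard deadline.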

The Core Question: Can an Agent Learn Human-like Heuristics?

What makes this project truly exciting isn’t just whether the agent can learn to park cars, but how it learns to solve the problem under pressure.

Think about how a human valet adapts. When things are slow, they follow the rules, parking each car in its assigned spot. But when the lot is busy and time is running out, they switch strategies. They might start ignoring the assigned numbers, memorizing the customer’s face and their car instead, and parking each car in the available spot closest to the exit for a quick retrieval.
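The human strategy described above can be written down as a hand-coded baseline to compare a trained agent against. The sketch below is only that, a scripted heuristic and not the RL agent itself. The function name `choose_spot`, its parameters, and the `rush_threshold` value are assumptions made for illustration.

```python
def choose_spot(assigned, free_spots, dist_to_exit, time_left, rush_threshold=30.0):
    """Pick a parking spot the way the human valet described above might.

    assigned:       spot number the customer asked for
    free_spots:     set of spot numbers that are currently empty
    dist_to_exit:   dict mapping spot number -> distance to the exit
    time_left:      seconds remaining in the episode
    rush_threshold: below this many seconds, the valet stops following the rules
    """
    if not free_spots:
        raise ValueError("no free spots available")

    # Calm period: follow the rules if the requested spot is actually free.
    if time_left > rush_threshold and assigned in free_spots:
        return assigned

    # Rush period (or requested spot taken): take the free spot nearest the exit.
    return min(free_spots, key=lambda spot: dist_to_exit[spot])


if __name__ == "__main__":
    distances = {1: 9.0, 2: 4.0, 3: 1.5}
    print(choose_spot(1, {1, 2, 3}, distances, time_left=120.0))  # follows the rule
    print(choose_spot(1, {1, 2, 3}, distances, time_left=10.0))   # bends the rule
```

A valet using this baseline would also need a customer-to-spot lookup (for example a plain `dict`) to retrieve cars later, since the spot no longer matches the number the customer declared.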

This project aims to see if an RL agent can discover similar, more abstract strategies. We’ll test this by introducing specific constraints:

- Time Pressure: If the game time is drastically reduced, will the agent abandon the “assigned spot” rule and invent a new, more efficient strategy?

- Memory vs. Logic: By increasing the number of identical car models, we force the agent to rely less on the car’s appearance and more on either memorizing the customer or strictly adhering to the parking spot numbers.
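One way to set up these two constraints is as experiment configurations that differ in a single knob each. The following is a rough sketch under assumptions: `ExperimentConfig`, its fields, the three preset names, and all numbers are hypothetical and are not taken from the prototype.

```python
import random
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentConfig:
    """Hypothetical knobs for one experimental condition."""
    episode_seconds: float  # total game time per episode
    num_cars: int           # customers arriving per episode
    num_car_models: int     # distinct car appearances available


# Illustrative presets: each stress test changes exactly one knob.
BASELINE = ExperimentConfig(episode_seconds=300.0, num_cars=8, num_car_models=8)
TIME_PRESSURE = ExperimentConfig(episode_seconds=60.0, num_cars=8, num_car_models=8)
LOOKALIKES = ExperimentConfig(episode_seconds=300.0, num_cars=8, num_car_models=2)


def spawn_models(cfg, rng):
    """Assign each arriving car a model id drawn uniformly at random."""
    return [rng.randrange(cfg.num_car_models) for _ in range(cfg.num_cars)]


def ambiguous_cars(models):
    """Count cars that share their model with at least one other car."""
    counts = Counter(models)
    return sum(1 for m in models if counts[m] > 1)


if __name__ == "__main__":
    rng = random.Random(0)
    for name, cfg in [("baseline", BASELINE), ("lookalikes", LOOKALIKES)]:
        models = spawn_models(cfg, rng)
        print(name, models, "ambiguous:", ambiguous_cars(models))
```

The `ambiguous_cars` count gives a rough measure of how often appearance alone fails to identify a car, which is the situation the Memory vs. Logic condition is meant to create.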

Will the agent learn to “bend the rules” like a human? Or will it discover a completely different, non-human approach to optimization?

Next Steps

A prototype has been built in Pygame to prove the concept. The next phase of the project involves porting the environment to Unity, which will provide a more robust physics engine and streamlined integration with modern RL frameworks. By progressively increasing the difficulty with new layouts and obstacles, we can continue to explore the fascinating and often surprising ways that AI agents learn to solve complex, human-centric tasks.